Optimising Your eCommerce Site for JavaScript SEO

JavaScript is one of the major programming languages for building a website, and its capabilities cannot be overstated. However, JavaScript does face problems when it comes to SEO, which is why many website owners and SEO specialists find JavaScript difficult, or even intimidating. The good news is that JavaScript SEO offers a range of solutions to best optimise the JS content on your site.

In this blog, you will learn:

In fact, JavaScript is one of the three core languages of the web, along with HTML and CSS. Where HTML is used to mark up the overall structure of a page and CSS is used for the design, JavaScript adds the functionality that a website needs in order to respond to a user’s actions.

So if JavaScript is so important, why is its place in SEO debated, or seen with caution, or even worse, almost completely avoided? If JavaScript is such a necessary part of a website, then why does it conflict – or rather, supposedly conflict – with SEO?

In contrast to such views, it is not the case that JavaScript should be seen with caution or that it in any way conflicts with SEO. Rather, JavaScript comes with an array of benefits that site owners can take advantage of, particularly for eCommerce purposes.

It is true that the language has significant, innate problems when it comes to search engines, which web developers are often unaware of. It is also true that developers may rely excessively on JavaScript when a specific part of the site, such as internal linking or image loading, could be built simply with HTML. It is this lack of awareness about how to implement JavaScript code to meet search engine standards, together with the overreliance on JS, that could be seen as the root cause of concern within the SEO community.

However, is this concern really justified? JavaScript has its inherent issues, but since JS is so crucial in the development of any site, optimising it for SEO cannot be ignored. Many of its issues also arise from incorrect implementations that developers may not recognise as problems from an SEO point of view. If these implementations are corrected, the issues can be eliminated, leaving only the advantages of the language.

This blog post sets out to do just that: explain how to best optimise your site for JavaScript SEO, with a particular focus on eCommerce sites. But don’t worry, many of the recommendations here can be applied to your site even if you don’t have an eComm service. So for any business trying to sell its products or services online, this is the guide for you.

We will begin by explaining what JavaScript is and how Google crawls and indexes JS content. We’ll then move on to what JavaScript SEO actually is and how it attempts to address the problems that arise from JavaScript, particularly for eCommerce brands. Once we have the necessary context, we’ll finish with 10 versatile solutions to optimise the JavaScript on your eCommerce website.

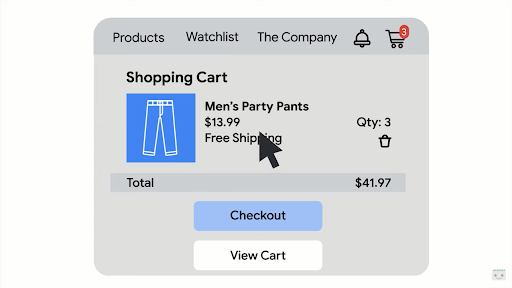

With JavaScript’s ability to make a site interactive and functional, such as adding a shopping basket notification or personalising the page in many other ways, it is increasingly common for websites to be built and powered entirely by JavaScript, using frameworks such as React, Vue.js and Angular (with React being the most common). These frameworks can be used not only to power mobile and web apps but also to create single-page applications and multiple-page applications (more on what single-page applications are later on).

I mentioned briefly how JavaScript can now be the sole element powering a website and other web applications, using frameworks like React. Let’s discuss further how JavaScript is used to power websites. This will help us understand why JavaScript SEO is such an essential element of any technical SEO strategy nowadays, since most websites are either powered by JavaScript or are JavaScript heavy.

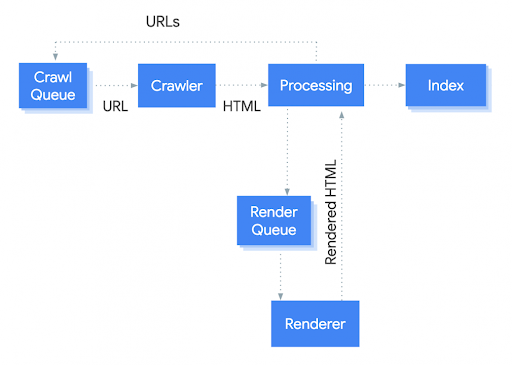

Finally, before moving on to discuss what JavaScript SEO is, and all of its implications, it is important to know how a search engine will crawl and index JavaScript. This last item of knowledge will help us to understand how a search engine interacts with JavaScript and why JavaScript SEO is much needed.

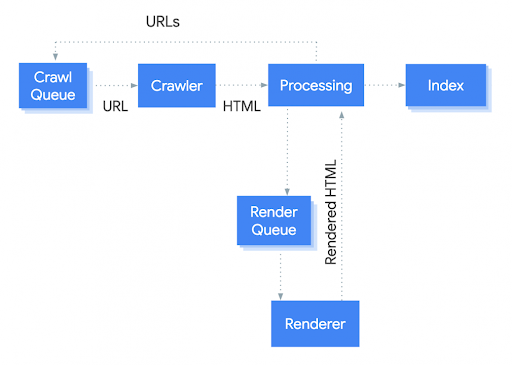

To better understand the process, let’s compare it to how Google crawls an HTML page.

First of all, the HTML is downloaded, the links are extracted and the CSS files are downloaded as well. These elements are then sent off to Google’s aptly named indexer, Caffeine, which then indexes the page. Sounds simple enough.

You can read about Google’s Caffeine indexer in more detail, from Google’s official documentation here.

The process for crawling JavaScript content starts in the same way, with the HTML file being downloaded. However, because the links are generated by JavaScript, they cannot be extracted so easily. So, instead, Google downloads the page’s JavaScript and uses Caffeine’s Web Rendering Service to render the page. Once the page has been rendered, the content and links can be extracted and indexed.

Clearly, the JavaScript process is more lengthy and complicated, with more resources and steps involved, making it a much more difficult procedure for Google.

In contrast, as you can see, crawling an HTML page is much more straightforward. All Google has to do is download the HTML from the page, extract the links and finally crawl them. However, the case is entirely different when JavaScript is involved: Google must first render the JavaScript and only then extract the links. This is the key difference, and it introduces a much greater delay in the process. It is this delay that so drastically affects crawlability and other aspects, such as page speed.
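To make the difference concrete, here is a minimal sketch of why links generated by JavaScript are invisible until the page has been rendered. The markup, URLs and the `extractLinks` helper are all hypothetical and only imitate, very roughly, what a crawler that does not render JavaScript would see.

```javascript
// Hypothetical markup: the same category page before and after rendering.
const rawHtml = `
  <div id="app"></div>
  <script src="/bundle.js"></script>
`;

const renderedHtml = `
  <div id="app">
    <a href="/shoes/trainers">Trainers</a>
    <a href="/shoes/boots">Boots</a>
  </div>
`;

// Collect href values from <a> tags found in a string of HTML.
// A regular expression is enough for this illustration; it is not a full HTML parser.
function extractLinks(html) {
  const anchorPattern = /<a\s[^>]*href="([^"]*)"/gi;
  return [...html.matchAll(anchorPattern)].map((match) => match[1]);
}

console.log(extractLinks(rawHtml));      // no links are present before rendering
console.log(extractLinks(renderedHtml)); // the product links only exist after rendering
```

The raw HTML contains nothing to follow, so the links can only be discovered once the extra rendering step has run.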

With this in mind, SEO professionals, especially those of us working with eComm brands, need to know how to best optimise JavaScript for search engines, to avoid such JS issues, considering that most of our clients’ sites are built with and powered by JavaScript. This is where JavaScript SEO comes into the picture.

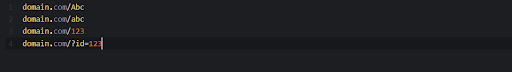

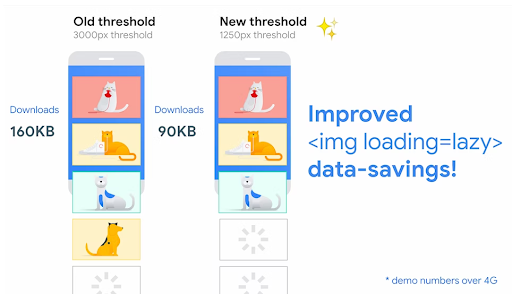

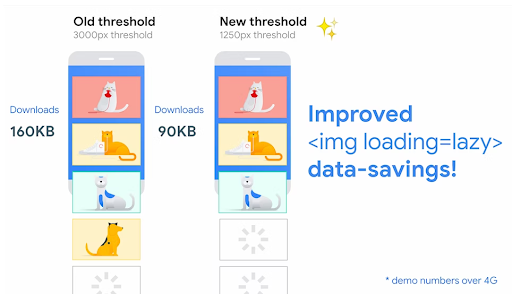

Rather, opt for the IntersectionObserver API, which triggers a callback when any observed element becomes visible. This callback is preferable to the scroll event, as it is more flexible and robust, and it fires when an element enters the viewport without relying on a user scrolling. This makes it a much more compatible option for Googlebot.

There is also the ability to lazy load images within the browser directly. This is referred to as browser-level lazy loading or native lazy loading. Chromium-based browsers such as Google Chrome, Microsoft Edge and Opera, as well as Firefox, support native lazy loading.
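The IntersectionObserver approach described above might look something like the following sketch. The function name `lazyLoadImages`, the `data-src` attribute and the `lazy` class are assumptions made for illustration; the observer constructor is passed in as a parameter only so the logic can be exercised outside a browser.

```javascript
// Swap each image's data-src into src once the image intersects the viewport.
// ObserverCtor defaults to the browser's IntersectionObserver.
function lazyLoadImages(images, ObserverCtor = globalThis.IntersectionObserver) {
  const observer = new ObserverCtor((entries) => {
    for (const entry of entries) {
      if (!entry.isIntersecting) continue; // still off-screen, nothing to do
      const img = entry.target;
      img.src = img.dataset.src;           // start the real download
      observer.unobserve(img);             // each image only needs loading once
    }
  });
  for (const img of images) observer.observe(img);
  return observer;
}

// In a browser, with hypothetical markup such as <img class="lazy" data-src="/img/trainers.jpg">:
// lazyLoadImages(document.querySelectorAll("img.lazy"));
```

Because the callback depends on visibility rather than on a scroll event, it still runs when a crawler makes everything visible at once by enlarging its viewport.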

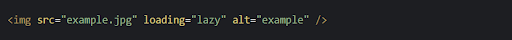

You can find an example code snippet in the image below, illustrating browser level lazy loading:
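As a text version of the same idea, here is a minimal sketch of a template helper that outputs an image tag using the standard `loading="lazy"` attribute. The helper name `lazyImageTag` and the example values are hypothetical, and the inputs are assumed to be trusted, already-escaped strings.

```javascript
// Build an <img> tag that opts in to native (browser-level) lazy loading.
// Width and height are included so the browser can reserve space before the image loads.
function lazyImageTag({ src, alt, width, height }) {
  return `<img src="${src}" alt="${alt}" width="${width}" height="${height}" loading="lazy">`;
}

console.log(lazyImageTag({ src: "/img/trainers.jpg", alt: "Red trainers", width: 400, height: 400 }));
// <img src="/img/trainers.jpg" alt="Red trainers" width="400" height="400" loading="lazy">
```

No JavaScript runs in the browser to trigger the load here; the browser itself decides when to fetch the image.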

Lazy loading images will be exceptionally helpful for your eCommerce website. For example, on a category page with multiple rows of products, implementing lazy loading, as part of your page’s code, will help to render the product images that much more efficiently, as you scroll down the page. This will directly result in faster site performance for users and also search engine crawl bots.

You can read about lazy loading even further here, in this blog post by web.dev.

- What is JavaScript?

- How does Google crawl and index JavaScript?

- What is JavaScript SEO?

- How to optimise your JavaScript eCommerce site for SEO

What is the Status of JavaScript on the Web?

The majority of websites on the internet use some degree of JavaScript to add interactivity, perform various functions, and fetch data from a backend database. It would be difficult, almost impossible, to perform such tasks without the use of this programming language on the web.

Image credit – Google

What is JavaScript?

Since JavaScript is such an extensive topic to discuss, I’ll simply describe what it is in general terms, with reference to eCommerce (if you want to read in more depth, you can use this resource by MDN). As mentioned above, JavaScript is the main programming language used to make a website come to life. How we as users interact on a site and carry out various functions is all done by JS. To highlight a few examples of what JavaScript can do, it can be used to:

- personalise your website

- deliver notifications

- load new content as you scroll down a page

- and much, much more!

Image credit – Google

What are JavaScript Powered Websites?

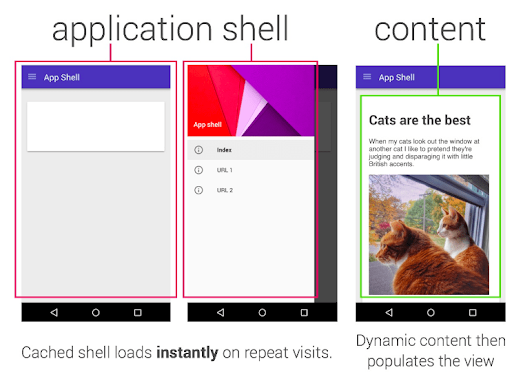

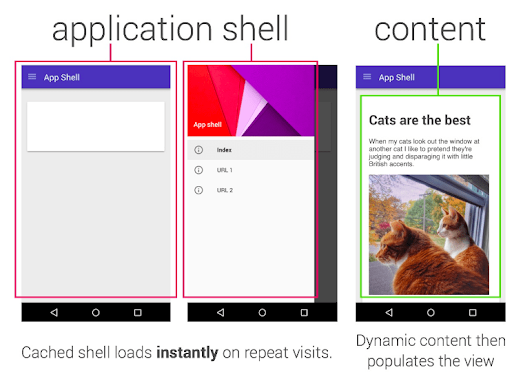

As previously mentioned, many websites, particularly in the eCommerce industry, are powered by JavaScript or are JavaScript heavy. This means that the website or application is not simply structured with HTML and then made interactive for the user, as in the examples mentioned above. Rather, the core or primary content of the site is inserted into the DOM (Document Object Model) with JavaScript code. As a result, that JavaScript must be crawlable and renderable in order for the content to be discovered at all.

Image credit – developer.chrome

How Does Google Crawl and Index JavaScript?

We now know what JavaScript is and the role it plays on the web today. So, let’s take a look at how Google actually crawls and indexes JavaScript. The first item to understand in how Google processes JavaScript is that it occurs in three main parts: crawling, rendering and finally, indexing. Google has produced a visual representation of this process, which can be seen below:

Image credit – Google

What is JavaScript SEO?

JavaScript SEO is a branch of technical SEO that focuses specifically on optimising the JavaScript on a site so that its content is more crawlable, indexable and search-engine friendly. This sounds a lot like any other strand of technical SEO. However, JavaScript presents its own set of challenges and is so widely used on the web that many websites are JavaScript heavy and rich with JavaScript-related problems, which is why this sub-field of technical SEO exists.

What are the Problems with JavaScript?

One of the most significant problems that websites run into with JavaScript is that their content is not rendered, crawled or indexed by Google. If this is the case, your most important pages will be missing from Google’s index and will not be able to rank for a user’s search query.

This is even more crucial for eCommerce websites. It would mean that pages with your best-selling products, or promotion pages (such as Black Friday or Christmas sales), would be completely left out of the SERPs. There wouldn’t even be a chance for users to discover your page, or brand, in the first place. This would have a detrimental effect on revenue targets as well as hamper your presence as an online retailer.

Aside from this most common issue of JavaScript content not being crawled or indexed correctly, there are a number of other problems that JavaScript SEO tries to resolve. Here are some of the objectives JavaScript SEO sets out to achieve:

- Ensuring content loaded using JavaScript is rendered, crawled and indexed correctly

- Carrying out the diagnosis and then troubleshooting of ranking issues for websites, as well as single-page applications

- Making sure priority pages on a website can be discovered by search engines via internal linking best practices

- Optimising speed and load times to ensure JavaScript content is rendered efficiently, which in turn will provide a smooth user experience

Why SEOs Need to Work with Developers

One of the main issues that JavaScript SEO attempts to resolve is the crawling, rendering and indexing of JavaScript content. An issue like this typically comes about due to a mishandling of JS on the site. Therefore, it is important for us as SEOs to collaborate with developers and help them understand the errors that may arise from using JavaScript without bearing SEO in mind.

A developer may look to fulfil a task by using the various packages JavaScript has to offer. To them, this is not only the best solution but also the easiest way to complete the task efficiently and effectively. However, this is also where the problem lies. What may seem to be the easiest way to complete a task using JavaScript might not be crawlable and indexable by search engines. Plain HTML might not be as appealing, but it is more compatible with SEO, as Googlebot, or a crawler from any other search engine, will be able to read the page without first having to render it.

So, in effect, this is guidance on the best practices developers should consider when using JavaScript, so that the site is SEO friendly and, as a result, user friendly as well.

What Role Does JavaScript SEO Play on eCommerce Sites?

As discussed earlier, eCommerce brands rely heavily on JavaScript for their websites to function seamlessly. Brands commonly use JavaScript to load products onto category pages and to dynamically update stock levels on their site, which helps retailers whose stock is in a constant state of fluctuation. So, if JavaScript isn’t optimised for SEO correctly, it can cause major problems, such as priority category pages not being crawled or indexed properly. Those pages would then not rank, ultimately affecting your organic performance.

So far we have discussed what JavaScript is, its purpose in the development of eCommerce websites, how Google crawls and indexes JavaScript, and the many problems that Google, and online retailers, can have with the language. We have also seen how JavaScript SEO has emerged to counter such problems, particularly in the eCommerce industry. Now that we know about the importance of JavaScript for eCommerce SEO, let’s look at how to optimise it!

How to Optimise Your JavaScript eCommerce Site for SEO

Now, before getting started on our 10 tips for JavaScript SEO, it is important to remember that many of the following recommendations will not only apply to eCommerce sites but also to any site in general. We can also categorise the forthcoming recommendations into four groups, which are:

- JavaScript SEO for Rendering, Crawling & Indexation

- JavaScript SEO for Technical SEO

- JavaScript SEO for Page Speed Performance

- Finally, JavaScript SEO for On-Page SEO

JavaScript SEO for Rendering, Crawling & Indexation

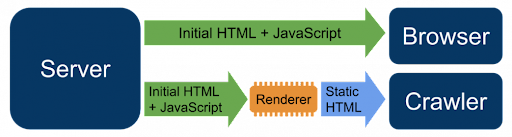

1. Ensure the Use of Dynamic Rendering for JavaScript Content

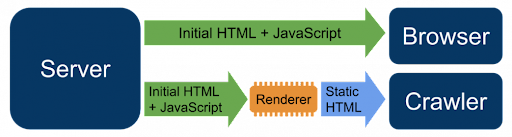

To start, it is important to know that the way your eComm site renders code is paramount to how Google will index your JavaScript content. Therefore, one of the first items to check in your JavaScript SEO efforts is that your site makes use of dynamic rendering to process your code. To truly understand dynamic rendering, I’ll first need to provide some context and discuss the other two rendering options: server-side rendering (SSR) and client-side rendering (CSR). Let’s begin with server-side rendering.

What is Server-Side Rendering?

Server-side rendering is when the JavaScript content on a page is rendered on the server and the rendered HTML page is then sent to the client, which can be, for example, the browser or Googlebot. A server-side rendered page is crawled in the same way as any other HTML page, so there should not be any JavaScript issues. However, server-side rendering can be challenging and complicated for developers. As SEOs, we want to keep our recommendations in line with Google’s guidelines as well as work collaboratively with developers and help them create an SEO-friendly website, and SSR may not be the easiest option from a development point of view.

What is Client-Side Rendering?

Client-side rendering is the opposite of server-side rendering. With this option, the page, and of course the JavaScript on the page, is rendered by the client, such as the browser or Googlebot itself. In this case, many issues can arise: the client may have trouble rendering the JavaScript, which in turn affects how the content is crawled and indexed.

What is Dynamic Rendering and why is it Better for JavaScript SEO?

Dynamic rendering is an alternative to server-side rendering and client-side rendering, introduced by Google’s John Mueller at Google I/O 2018. This option allows you to deliver content to the user via JavaScript code that is executed in the browser, while only a static, pre-rendered version is sent to Googlebot. In other words, client-side rendered content is sent to the user and server-side rendered content is sent to the search engine bot. This is the preferred method for rendering JavaScript content and should be used for JavaScript heavy sites, such as eCommerce websites. Bear in mind that Google’s current documentation describes dynamic rendering as a workaround rather than a long-term solution, so it is worth checking the latest guidance before committing to it. A visual representation, by Google, can be found below:

Image credit – Google
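The decision at the heart of dynamic rendering can be sketched as a simple user-agent check. This is only an illustration under stated assumptions: the list of bot names, the function names and the two return values are hypothetical, and a real setup would sit in your server or CDN and return an actual pre-rendered snapshot.

```javascript
// Hypothetical list of crawler user-agent substrings; extend it for the bots you care about.
const BOT_PATTERNS = ["googlebot", "bingbot", "duckduckbot"];

// Return true when the user-agent string looks like a known search engine crawler.
function isSearchBot(userAgent = "") {
  const ua = userAgent.toLowerCase();
  return BOT_PATTERNS.some((pattern) => ua.includes(pattern));
}

// Decide which version of a page to serve for a given request.
function chooseVersion(userAgent) {
  return isSearchBot(userAgent)
    ? "prerendered-html" // static snapshot with the content already in the HTML
    : "client-side-app"; // normal JavaScript application for users
}

console.log(chooseVersion("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"));
// prerendered-html
```

Both versions should contain the same content; only the way that content is delivered differs.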

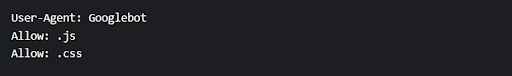

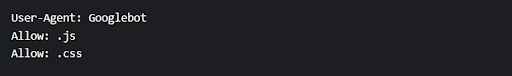

2. Ensure Google has Access to your JavaScript Content

Another way to optimise your site for JavaScript is to make sure your robots.txt file allows Google to download and render your JavaScript content. Quite often, JavaScript content is blocked in the robots.txt file by accident. If this is the case, Google won’t be able to access and download those resources, so your content will not be rendered. Blocking JavaScript does not in itself stop the page URL from being indexed, but any content that depends on that JavaScript will not be rendered and so cannot be indexed, which is just as much of an issue. A simple way to solve this issue is by adding a very basic item of code, which can be found below, to your robots.txt:

Google does not index JavaScript or CSS files and show them in search results. This code is simply to help Google render your web page.

There is no reason for you to block JavaScript & CSS resources from Google. By doing so, you could prevent your content from being rendered and thereby, most important of all, from being indexed.
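The kind of robots.txt rules referred to above can be sketched as follows. The exact rules are an assumption about a typical setup rather than a reproduction of any particular site's file, and `buildRobotsTxt` is a hypothetical helper used here only to print them.

```javascript
// Build robots.txt rules that explicitly let Googlebot fetch script and style files.
// The wildcard patterns are illustrative; adjust them to your own file layout.
function buildRobotsTxt() {
  return [
    "User-agent: Googlebot",
    "Allow: /*.js",
    "Allow: /*.css",
  ].join("\n");
}

console.log(buildRobotsTxt());
```

It is also worth checking that no broader Disallow rule elsewhere in the file covers the folders where your scripts and stylesheets live.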

JavaScript SEO for Technical SEO

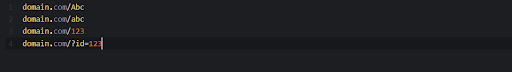

3. Ensure JavaScript Based Duplicate Content is Removed

When using JavaScript, multiple URLs may end up being present for the same web page. Typically, the reason behind the multiple URLs is simply capitalisation, or non-capitalisation, of the URL slug, the use of number-based IDs, or parameters with IDs. This can, of course, cause duplicate content issues. Examples of such duplicated content can be seen below:

Much like the issue with the robots.txt file, this issue also has a simple solution. You just need to select the version you want to be indexed and set a canonical tag pointing to it.
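As an illustration, here is a minimal sketch of how several URL variants can be collapsed to one canonical version. The example URLs and helper names are hypothetical, and stripping the whole query string is a simplification that only suits parameters which do not change the page content.

```javascript
// Hypothetical URL variants that all show the same product page.
const variants = [
  "https://www.example.com/Shoes/Red-Trainers",
  "https://www.example.com/shoes/red-trainers",
  "https://www.example.com/shoes/red-trainers?id=123",
];

// Collapse a variant to the single version chosen for indexing:
// lower-case path, no query string, no fragment.
function canonicalUrl(rawUrl) {
  const url = new URL(rawUrl);
  url.pathname = url.pathname.toLowerCase();
  url.search = "";
  url.hash = "";
  return url.toString();
}

// Build the canonical tag to place in the <head> of every variant.
function canonicalTag(rawUrl) {
  return `<link rel="canonical" href="${canonicalUrl(rawUrl)}">`;
}

for (const variant of variants) console.log(canonicalTag(variant));
// Each line prints: <link rel="canonical" href="https://www.example.com/shoes/red-trainers">
```

Every variant ends up pointing to the same URL, which is the version search engines are asked to index.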

4. Optimise Your Error Pages

The fourth optimisation tactic is to optimise your error pages. This can be an issue because client-side JavaScript frameworks run in the browser rather than on the server. This means that if a URL cannot be found, the site will not by itself be able to return an error status code, such as a 404. So, when it comes to optimising error pages, there are two main solutions:

- The first is to use JavaScript to redirect to a page that does return a 404 status code.

- The other is to add a noindex tag to the page, along with a message such as “this page could not be found.” Of course, it’s up to you how creative you want to be with your error message. It is important to note that this error page may be treated as a soft 404, as the actual status code will be a 200.
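The two options above can be sketched in a single function. The function name, the `/not-found` path and the shape of the `product` object are hypothetical; `win` and `doc` default to the browser's `window` and `document` and are parameters only so the logic can be run elsewhere.

```javascript
// Handle a product that could not be found in a client-side rendered app.
// Option 1 (redirect: true): go to a URL that the server answers with a real 404.
// Option 2 (redirect: false): stay on the page but add a robots noindex tag.
function handleMissingProduct(
  product,
  { redirect = true, win = globalThis.window, doc = globalThis.document } = {}
) {
  if (product && product.exists) return "ok";

  if (redirect) {
    win.location.href = "/not-found"; // hypothetical path that returns a 404 status code
    return "redirected";
  }

  const meta = doc.createElement("meta");
  meta.name = "robots";
  meta.content = "noindex";
  doc.head.appendChild(meta);
  return "noindexed";
}
```

With the second option the status code is still a 200, so the noindex tag is what tells search engines not to keep the page.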

5. Avoid the Excess Use of JavaScript Files on your Website

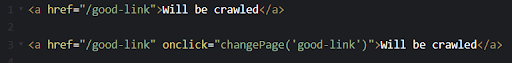

Another best practice to keep in mind is to avoid or reduce the spread of JavaScript files across your website. Since JavaScript is such a popular choice for development, the number of JavaScript files can grow quickly. This is particularly the case if each interactive component of the website is stored in a separate JavaScript file, which makes managing all of these JS files difficult. Reducing the number of individual JavaScript files that a browser needs to download will directly benefit site performance and, in turn, can help your rankings on search results pages.

One of the best ways to identify whether you have too many JavaScript files on your site is to use Google’s PageSpeed Insights tool. On the surface, this tool is mostly used for analysing site speed, but it can also play a significant role in optimising the JavaScript on your website. Google suggests that the most pragmatic solution is to merge several small JavaScript files into a few larger files. These fewer, larger JS files can then be downloaded at the same time as one another, making the process of rendering the page and adding interactivity that much more efficient.

6. Avoid Using JavaScript in Your Internal Linking

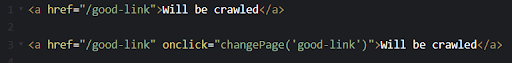

JavaScript can also cause issues for search engines when crawling internal links. Most commonly, developers use JavaScript when creating buttons or other interactive on-click elements. This is a particular issue because one of the ways Google discovers new pages, as well as passes PageRank to the most significant pages on your website, is via your internal linking setup. Thus, using JavaScript as part of your internal linking implementation can cause important pages on your site to be left undiscovered and, ultimately, not rank on SERPs. The solution is to use only HTML anchor tags with href attributes, together with descriptive anchor text, when including internal links in your site, so that users and search engines are clearly informed where each link leads. Example code can be found in the below image:
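In text form, the contrast between a crawlable and a non-crawlable link might look like the sketch below. The helper names, URLs and the `goTo` handler are hypothetical, and the regular expression is only a rough check rather than a full HTML parser.

```javascript
// Crawlable: a real anchor tag with an href and descriptive anchor text.
function productLink(href, text) {
  return `<a href="${href}">${text}</a>`;
}

// Rough check: does the markup contain an <a> tag whose href is a real URL,
// rather than being empty, "#" or a javascript: pseudo-link?
function hasCrawlableHref(html) {
  return /<a\s[^>]*href="(?!#|javascript:)[^"]+"/i.test(html);
}

const goodLink = productLink("/shoes/trainers", "Shop men's trainers");
const badLink = `<span onclick="goTo('/shoes/trainers')">Shop men's trainers</span>`;

console.log(hasCrawlableHref(goodLink)); // true
console.log(hasCrawlableHref(badLink));  // false
```

Both versions can look identical to a user, but only the first gives a crawler a URL to follow.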

To find out more about internal linking, read our blog post, from our senior strategist, Callum Lockwood, on internal linking strategies.

7. Ensure the Use of Pagination

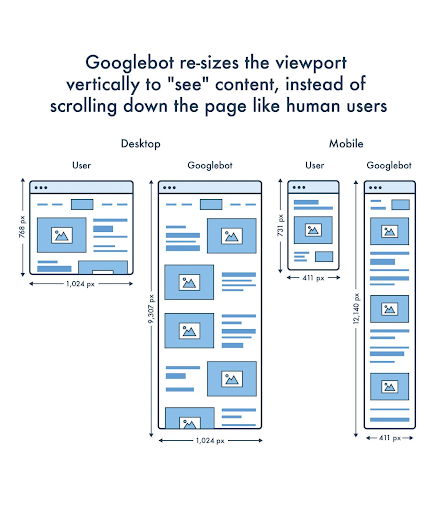

The use of pagination is particularly important for eCommerce websites, especially if you have a large catalogue of products displayed on a page. While endless scrolling may appear useful from a user perspective, it is not the most friendly option when it comes to SEO. This is because search engine bots cannot scroll down the page the way a user would, or repeatedly click a “view more” button to access additional content. Sooner or later Googlebot, or any other search engine bot, will reach a limit and stop crawling your page. The products beyond that point would be neglected in the search results, since they would not be crawled as often, which would directly harm your rankings. Pagination is the solution, and the best way to implement it is via href links, which allow Google to access the second and subsequent pages of your paginated product listing.

JavaScript SEO for Page Speed Performance

8. Lazy Loading Your Images is a Must

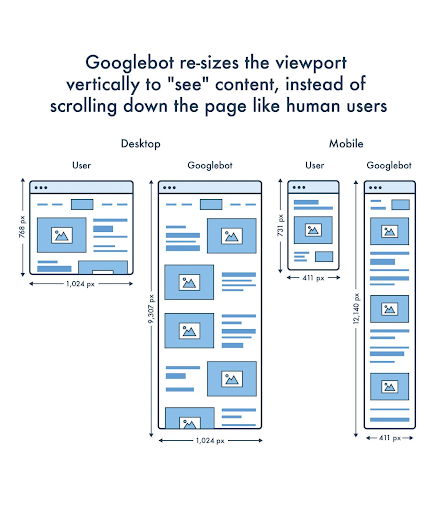

JavaScript can also affect the crawlability of images that you lazy load. Lazy loading is an effective way to improve rendering speed and optimise for Core Web Vitals, but it needs to be done correctly, and JavaScript can get in the way. Googlebot does support lazy loading. However, as mentioned earlier when discussing pagination, Google will not scroll down a page the way a user does. Instead, it resizes its own virtual viewport to be tall enough to view and crawl the content of the page. This becomes an issue when images are loaded by a script that listens for the “scroll” event, which fires when a user scrolls down the page. Because Google changes the viewport of its crawler to see the content all at once, that event is never triggered, and the images that would have appeared gradually never load. Lazy loading that is handled by the browser itself, or that checks whether an image has entered the viewport rather than waiting for a scroll, avoids this problem. You can also see how Googlebot views content on a page differently to a user in the diagram below:

Image credit – Google

Image credit – Web.dev
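
One way to lazy load without depending on a scroll event is the browser's built-in `loading="lazy"` attribute. The sketch below renders such an image tag as a string; the function name, file path and dimensions are hypothetical.

```javascript
// Escape a value so it is safe inside a double-quoted HTML attribute.
function escapeAttribute(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Render an <img> that the browser lazy loads natively. No scroll listener is
// involved, and the real image URL is present in the src attribute.
// Explicit width and height let the browser reserve space before loading.
function renderLazyImage({ src, alt, width, height }) {
  return (
    `<img src="${escapeAttribute(src)}" alt="${escapeAttribute(alt)}" ` +
    `width="${Number(width)}" height="${Number(height)}" loading="lazy">`
  );
}

console.log(
  renderLazyImage({
    src: "/images/red-trainers.jpg", // hypothetical product image
    alt: "Red running trainers",
    width: 600,
    height: 400,
  })
);
```

Images that appear at the top of the page are usually better left without lazy loading, so that they render as early as possible.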

9. Optimise Page Speed

As mentioned, JavaScript can also affect page speed. This is an issue because slow pages create a poor user experience, and if a page takes too long to load, Googlebot may not be able to crawl and render it fully. Users will also eventually leave a site that takes too long to load, and if Google sees users continuously leaving your site, this can have a negative effect on how it ranks on Google SERPs. There are a number of solutions that you can use to overcome this problem. Here are just a few:

- Firstly, minify your JavaScript

- Secondly, defer non-critical JavaScript until after the main content of the page has been rendered in the DOM

- Thirdly, inline your critical JavaScript

- Finally, serve JavaScript in smaller payloads
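
Two of the points above, inlining critical JavaScript and deferring the rest, can be sketched as follows. The `scripts` list, its `critical` flag and the file names are hypothetical; the `defer` attribute itself is standard HTML and tells the browser to run the script only after the document has been parsed.

```javascript
// Render <script> tags for a page. Critical code is inlined so it runs
// without an extra request; everything else is loaded with `defer`.
// Note: inlined code must not contain the text "</script>".
function renderScriptTags(scripts) {
  return scripts
    .map((script) =>
      script.critical
        ? `<script>${script.code}</script>`
        : `<script src="${script.src}" defer></script>`
    )
    .join("\n");
}

// Hypothetical scripts for a product page; the .min.js files are assumed to
// have been minified and split into small bundles by a build step.
const tags = renderScriptTags([
  { critical: true, code: "document.documentElement.classList.add('js');" },
  { critical: false, src: "/js/reviews-widget.min.js" },
  { critical: false, src: "/js/recommendations.min.js" },
]);

console.log(tags);
```

Which scripts count as critical depends on the page; a reasonable rule of thumb is anything the main content cannot render without.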

JavaScript SEO for On-Page SEO

10. Optimise Meta Data
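
The core idea of this tip, giving each view of a single-page application its own title, description and h1, can be sketched in plain JavaScript. The route table, store name and function names are hypothetical; in a React project a metadata package would normally do this work for you.

```javascript
// Hypothetical route table: each view has its own title, description and h1.
const viewMetadata = new Map([
  ["/", {
    title: "Example Store | Home",
    description: "Shop the full Example Store range.",
    h1: "Welcome to Example Store",
  }],
  ["/womens/shoes", {
    title: "Women's Shoes | Example Store",
    description: "Browse women's shoes at Example Store.",
    h1: "Women's Shoes",
  }],
]);

// Report any title that is used by more than one view.
function findDuplicateTitles(metadata) {
  const seen = new Set();
  const duplicates = [];
  for (const { title } of metadata.values()) {
    if (seen.has(title)) duplicates.push(title);
    seen.add(title);
  }
  return duplicates;
}

// Apply a view's metadata to a document-like object.
// In the browser you would pass `document` whenever the route changes.
function applyMetadata(doc, path) {
  const meta = viewMetadata.get(path);
  if (!meta) return null; // unknown view: leave the existing metadata alone
  doc.title = meta.title;
  const description = doc.querySelector('meta[name="description"]');
  if (description) description.setAttribute("content", meta.description);
  const heading = doc.querySelector("h1");
  if (heading) heading.textContent = meta.h1;
  return meta;
}

console.log(findDuplicateTitles(viewMetadata)); // an empty list means every title is unique
```

Running a duplicate check like this as part of a build or test step is one way to catch views that silently share the same title.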

This is an obvious one, but if your site is built using JavaScript, there are a few on-page SEO issues to look out for. They are most common with SPAs (single-page applications), which tend to use a JavaScript router package, such as react-router, to move between pages, or what are called “views” on SPAs. The metadata for each view is then typically handled by a separate npm package, for example react-meta-tags.

Essentially, when users and search engine crawlers follow links to URLs on a website built with React, they are not actually being served separate static HTML files. Instead, the components on the root ./index.html file, such as the headers, footers and body content, are reshuffled and reorganised to present different content to the user. Hence the name ‘single-page application’: rather than serving multiple pages to the user, the contents of the current page are simply rearranged to be displayed differently. Examples of single-page applications include sites like Netflix, Gmail and PayPal.

This is where on-page SEO comes into play. With single-page applications, the same metadata is often reused across different views. When you come across issues like this, it is important to use the right JavaScript package to ensure that a unique page title, description and h1 are served for each view.

Final Thoughts

To conclude, JavaScript is one of the most versatile programming languages in web development and is responsible for the functionality of a website. With its many built-in capabilities, the language is ideal for eCommerce businesses. However, without proper care and implementation, it can cause a range of serious problems, most notably content that Googlebot cannot crawl or index. It is for this reason that JavaScript has been viewed with a certain amount of fear by the SEO community, and it is where JavaScript SEO has found its place within technical SEO. Ten tips have been outlined in this blog post to optimise JavaScript for eCommerce platforms, and you can find a quick recap below:

- Ensure the use of dynamic rendering

- Ensure Google can properly access your JavaScript content

- Try to avoid producing an excessive number of JavaScript files on your site

- Keep away from using JavaScript in your internal linking strategy and only implement HTML

- Use pagination to help Googlebot crawl your products rather than continuous scrolling

- Make sure to use lazy loading in the browser to improve page speed

- Finally, optimise your pages’ metadata