The Best Practices of SEO Colour Variations for eCommerce Sites

How URLs handle colour variations is a crucial topic that should be decided before setting up the website’s structure. While defining a good user experience is essential, you should also keep SEO best practices in mind, primarily because many eCommerce sites have thousands or even millions of product pages.

In this article, we’ll discuss the best practices of SEO colour variations for eCommerce sites. If you are thinking of changing your website structure or if you want to confirm if you’re using the proper method, we’ll help you to find out!

Before going into the post, it is vital to understand precisely what URL parameters are and how we should use them. If query strings are not set up efficiently, multiple versions of a single piece of content can be crawled and indexed. This means endless combinations of parameters can create thousands of URL variations out of the same content and generate duplicate content. With this in mind, we will show you the most common SEO issues caused by URL parameters that can affect your site’s ranking.

Here is an overview of this article:

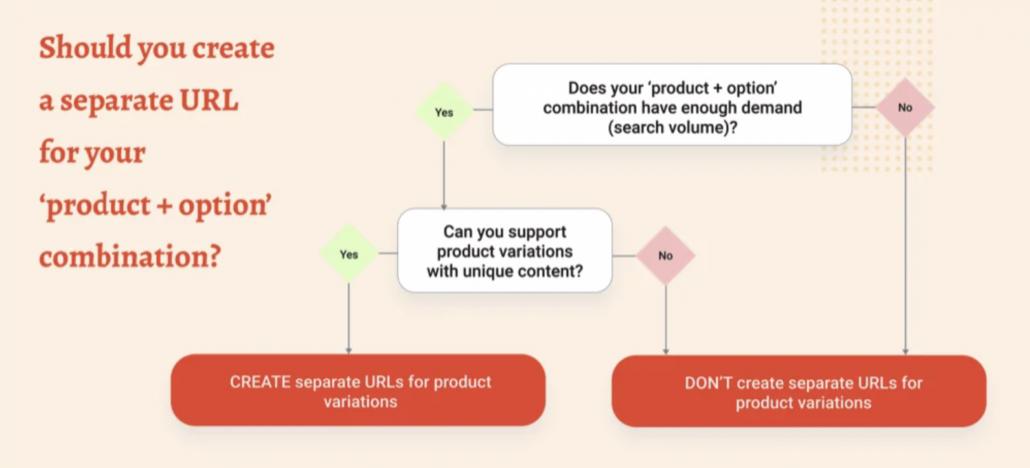

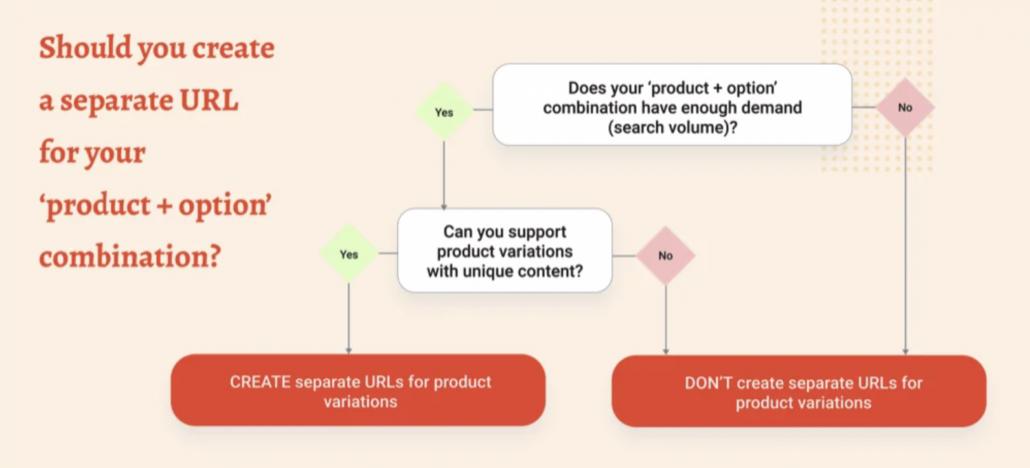

- What are URL parameters?

- The most common use cases

- SEO issues with URL parameters

- SEO solutions with pros & cons

- How to decide which SEO tactics to implement?

- The Best SEO Practices for Colour Variations

What are URL parameters?

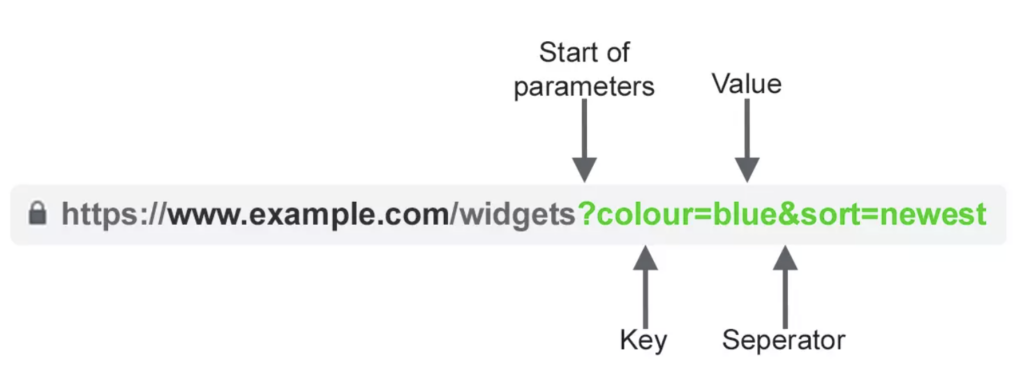

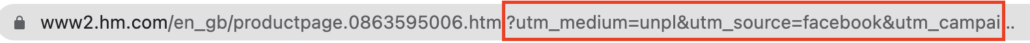

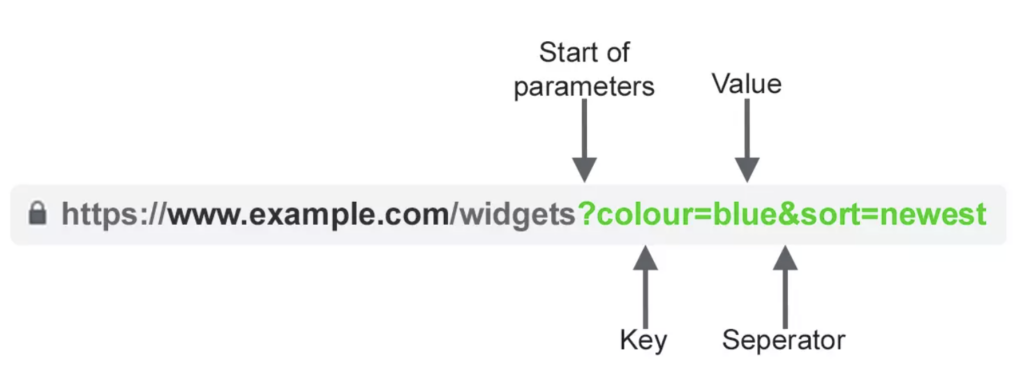

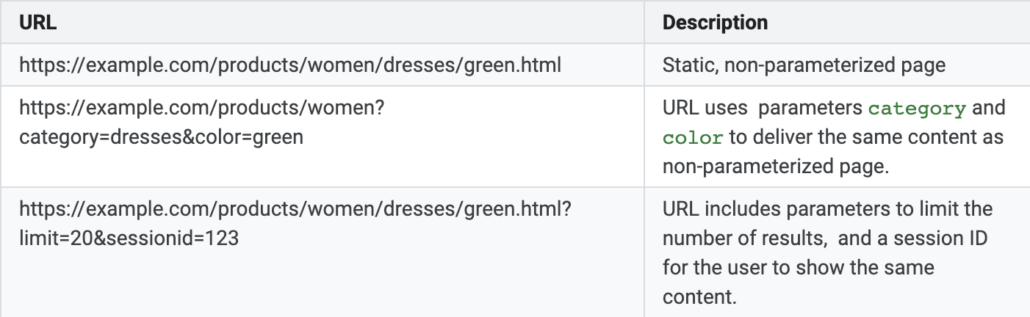

URL parameters (or “query strings”) are pieces of information inserted at the end of URLs. They are commonly used to help filter or sort content on a page (e.g. colours, size or popularity of the products) and make it easier to navigate an eCommerce store. Query strings allow users to order a page according to specific filters and find a particular element (e.g. “size 6 UK” or “blue dresses”).

Additionally, they can track information on your website and determine where traffic comes from. By monitoring the user’s clicks, you can determine whether they come from a social media campaign, newsletters or ad campaigns.

URL query parameters begin after a question mark (?) and are made up of a key and a value, separated by an equals sign (=). A common mistake is to insert multiple values in the same key. If you want to use several parameters, they should be separated by an ampersand (&). This is an example of what URL parameters look like:

Source: Search Engine Journal
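To make the key, value and ampersand structure concrete, here is a minimal sketch that splits a query string into its parameters using Python's standard `urllib.parse` module. The URL and the `read_parameters` helper are hypothetical and only serve as an illustration.

```python
from urllib.parse import urlsplit, parse_qs

def read_parameters(url: str) -> dict:
    """Return the query-string parameters of a URL as {key: [values]}."""
    query = urlsplit(url).query   # everything after the '?'
    return parse_qs(query)        # splits pairs on '&' and key/value on '='

# Hypothetical example URL, not taken from a real store.
url = "https://www.example.com/dresses?colour=blue&size=6&sort=lowest-price"
params = read_parameters(url)
print(params)
# {'colour': ['blue'], 'size': ['6'], 'sort': ['lowest-price']}
```

Each key maps to a list because the same key can legally appear more than once in a query string.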

To learn more about URL parameters, we found this guide, SEO-Friendly URL Structures and Parameters, helpful.

The most common use cases for parameters

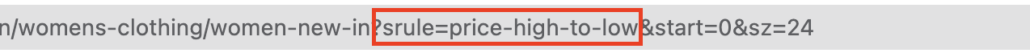

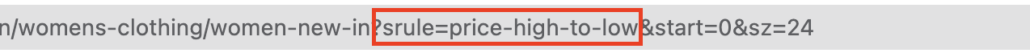

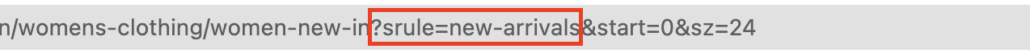

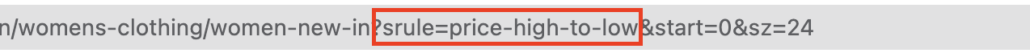

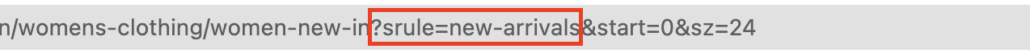

There are two types of query strings. The first is when you want to modify content and the second is when you want to track something. The most common use cases for parameters are:

- Reordering – used for ordering products according to specific criteria such as the lowest price, the highest-rated products or the newest products. e.g. sort=lowest-price, order=highest-rated or sort=new-arrivals

- Filtering – used to filter for different values, for a specific colour or a specific price range. e.g. type=widget, colour=blue or price-range=20-50

- Identifying – used to sort pages by type, category or size. e.g. product=small-blue-widget or categoryid=124

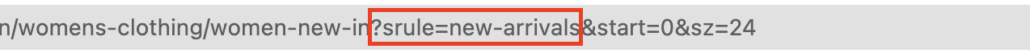

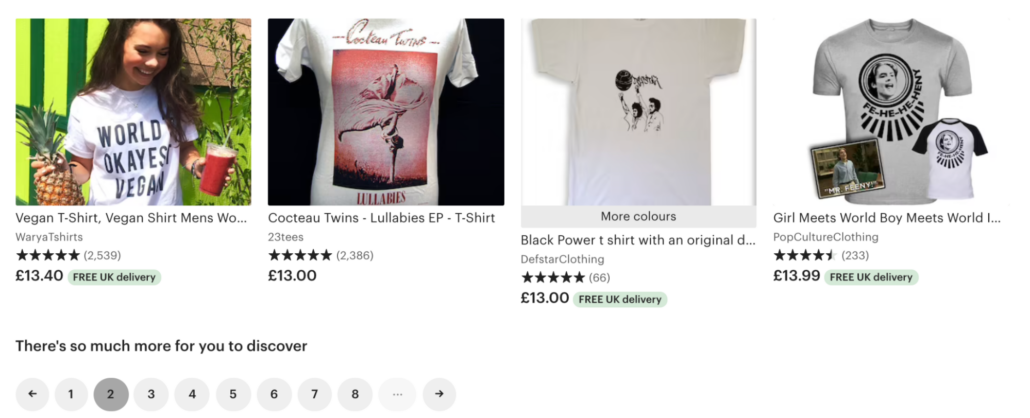

- Paginating – used to divide content into pages for online stores to avoid infinite scrolling. e.g. page=2 or viewItems=10-30. Here is an example of a pagination parameter after going to the second page of a category of items on Etsy:

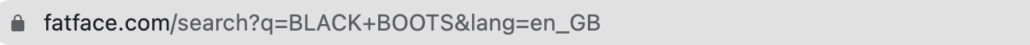

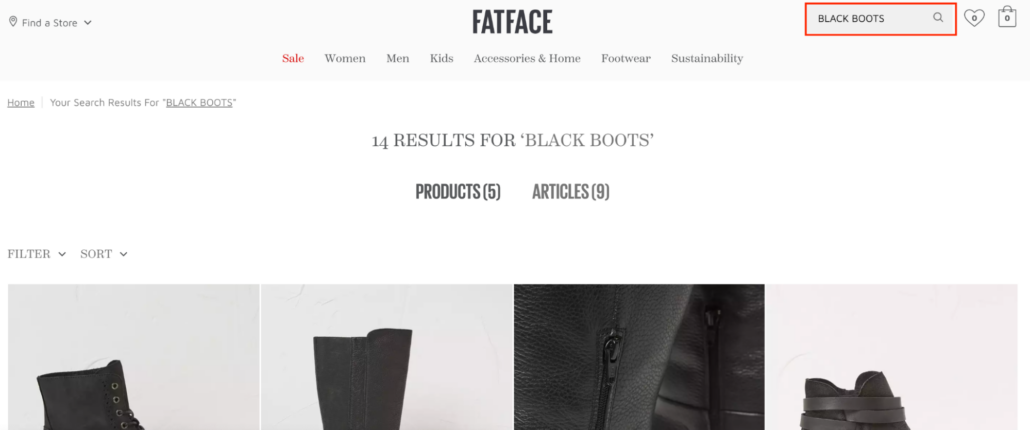

- Searching – used to find something on a site’s internal search. e.g. query=users-query, q=users-query or search=drop-down-option

- Translating – used to add the language code to the end of the URL parameter. e.g. lang=en_GB or language=pt

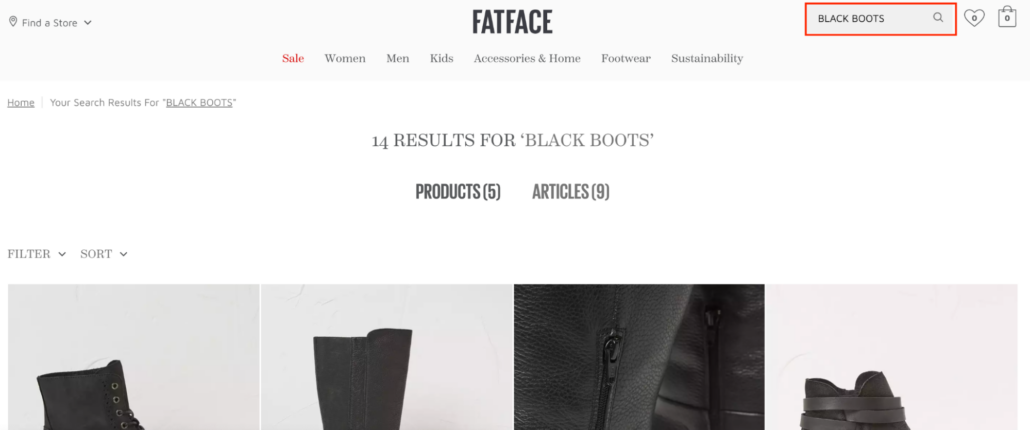

- Tracking – used for tracking the traffic from ads or a campaign. e.g. utm_medium=social or sessionid=123
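The use cases above can be sketched as a simple lookup from parameter key to use case. The keys below are the examples from the list; the `PARAMETER_TYPES` table and `classify_parameters` function are hypothetical, and a real store would maintain its own mapping.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumed mapping from parameter keys to the use cases listed above.
# The keys are the article's examples; a real site would use its own.
PARAMETER_TYPES = {
    "sort": "reordering", "order": "reordering",
    "type": "filtering", "colour": "filtering", "price-range": "filtering",
    "product": "identifying", "categoryid": "identifying",
    "page": "paginating", "viewItems": "paginating",
    "query": "searching", "q": "searching", "search": "searching",
    "lang": "translating", "language": "translating",
    "utm_medium": "tracking", "sessionid": "tracking",
}

def classify_parameters(url: str) -> dict:
    """Label every parameter in the URL with its use case, or 'unknown'."""
    pairs = parse_qsl(urlsplit(url).query)
    return {key: PARAMETER_TYPES.get(key, "unknown") for key, _ in pairs}

print(classify_parameters(
    "https://www.example.com/widgets?colour=blue&page=2&utm_medium=social"
))
# {'colour': 'filtering', 'page': 'paginating', 'utm_medium': 'tracking'}
```

An inventory like this is a useful first step, since the right SEO treatment depends on what each parameter does.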

SEO issues with URL parameters

- Create Duplicate Content

- Waste Crawl Budget

- Split Page Ranking Signals

- Make URLs Less Clickable

1. Create Duplicate Content

Source: Google Webmasters

Parameterised URLs often serve the same, or nearly the same, content as the original page. Having multiple pages optimised for the same keyword can confuse Google, negatively impacting your website’s rankings.

2. Waste Crawl Budget
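To get a feel for how quickly parameter URLs multiply, the sketch below counts the URLs that a single category page can generate from three filters with three options each. The filter names, values and the `parameter_urls` helper are hypothetical.

```python
from itertools import product
from urllib.parse import urlencode

# Hypothetical filter options for a single category page.
FILTERS = {
    "colour": ["blue", "green", "red"],
    "size": ["s", "m", "l"],
    "sort": ["lowest-price", "highest-rated", "new-arrivals"],
}

def parameter_urls(base_path: str, filters: dict) -> list:
    """Build every URL in which each filter is either unset or set to one value."""
    keys = list(filters)
    # None stands for "this filter is not applied".
    choices = [[None] + filters[key] for key in keys]
    urls = []
    for combo in product(*choices):
        pairs = [(k, v) for k, v in zip(keys, combo) if v is not None]
        urls.append(base_path + ("?" + urlencode(pairs) if pairs else ""))
    return urls

urls = parameter_urls("/clothing/women-dresses", FILTERS)
print(len(urls))  # 64 URLs for one category page
```

This count assumes a fixed parameter order; if the site also accepts the same parameters in a different order, the number of crawlable URLs grows further.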

As we can see, query strings can create multiple URLs that point to very similar content. Crawling these redundant pages wastes the crawl budget, reduces your site’s ability to get its most relevant pages indexed, and increases server load. According to Google Webmasters: “Overly complex URLs, especially those containing multiple parameters, can cause problems for crawlers by creating unnecessarily high numbers of URLs that point to identical or similar content on your site. As a result, Googlebot may consume much more bandwidth than necessary, or may be unable to completely index all the content on your site.”

3. Split Page Ranking Signals

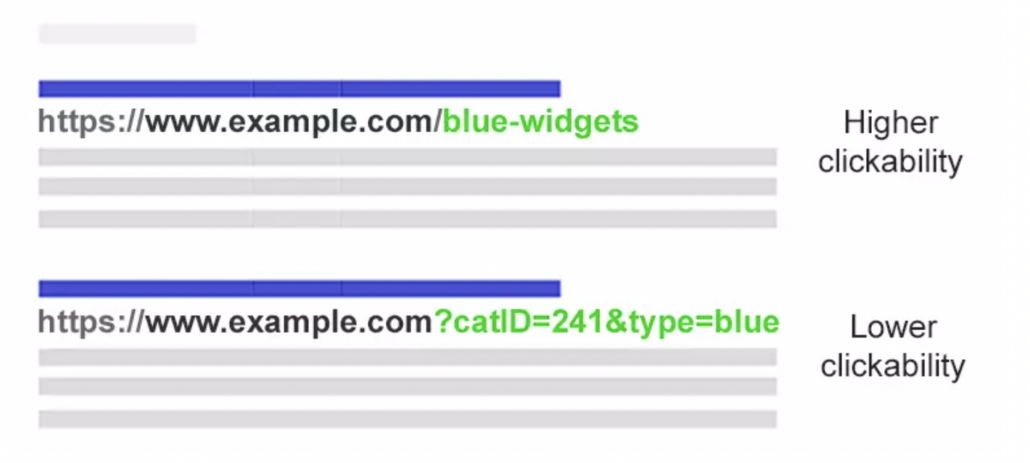

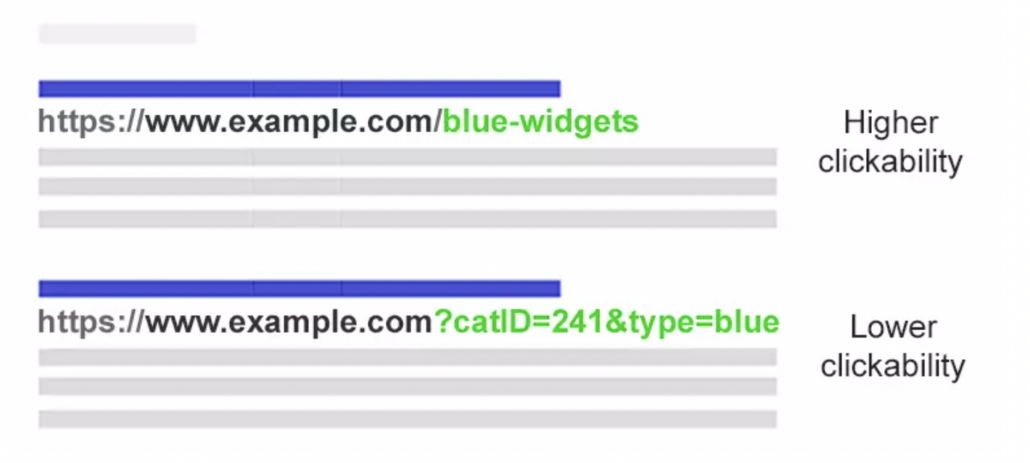

Multiple URLs pointing to the same content can dilute your ranking signals. For example, links and social shares might point to any reordered or filtered page instead of the original page. This can damage your ranking visibility since the crawlers will get confused and struggle to choose which competing pages to index for the search query.

4. Make URLs Less Clickable

When URLs with multiple parameters are displayed on the SERPs, they can look spammy and untrustworthy. As shown in the image below, parameterised URLs tend to be clicked and shared less often.

Source: Search Engine Journal

The SEO solutions for URL parameters (with pros & cons)

Fortunately, there are some robust solutions to help you avoid the SEO issues caused by URL parameters. We’ll show you four tactics for dealing with URL parameters:

- Rel=”Canonical” Link Attribute – This encourages search engines to consolidate the ranking signals to the URL specified as canonical.

- Meta Robots Noindex Tag – This tag will prevent search engines from indexing the page.

- Robots.txt Disallow – This prevents the search engines from crawling specific pages. When they see something disallowed, they won’t crawl those pages.

- Move From Dynamic to Static URLs – With this, you can use server-side URL rewriting to convert parameters into subfolder URLs.

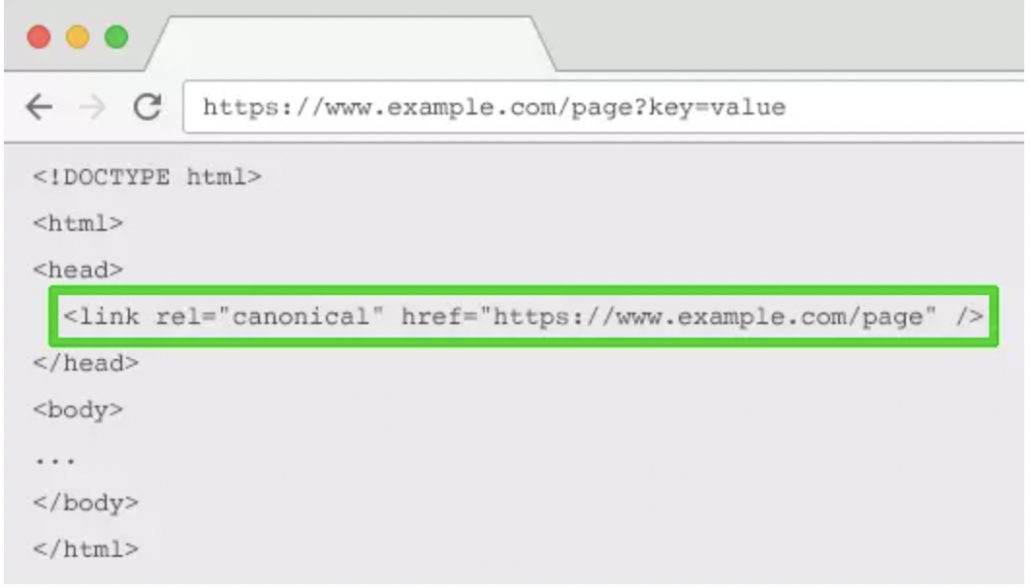

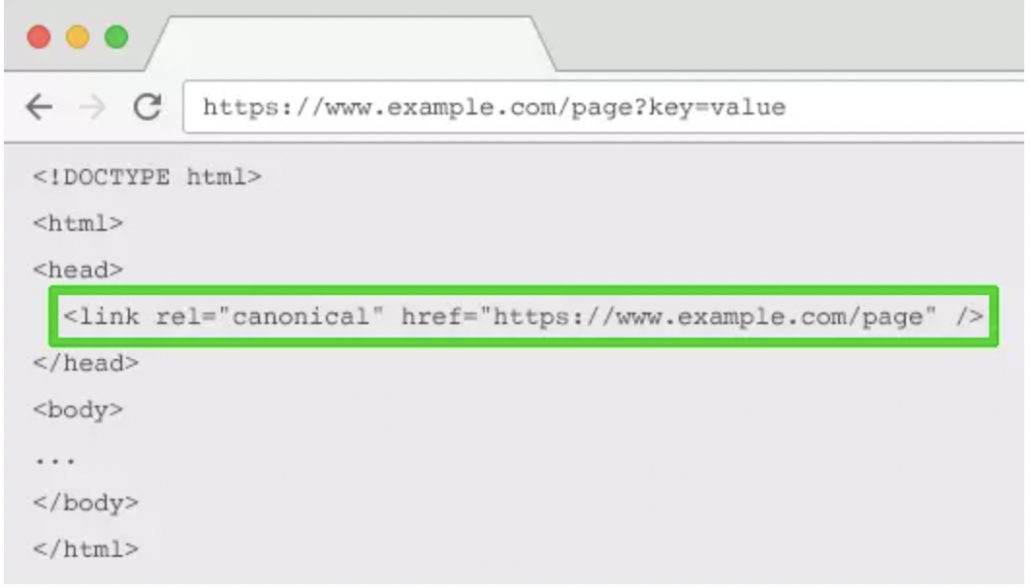

1. Rel=”Canonical” Link Attribute

This is a great tactic to allow the search engines to consolidate the ranking signals to the URL specified as canonical.

Source: Search Engine Journal

Before setting up a “canonical” link attribute, you’ll need to decide which static page should be indexed. The next step is to set up canonical tags on the parameterised URLs, referencing the original page. By adding the tag to the original product page, search engines will know which page you want them to display. For example, the first URL below should be the static, canonical URL:

- /clothing/women-dresses/

- /clothing/women-dresses?color=green

- /clothing/women-dresses?type=midi-dresses

Pros of using Rel=”Canonical” tactic:

- It helps to prevent duplicate content

- Consolidates ranking signals to the static URL

- Easy technical implementation

Cons of using Rel=”Canonical” tactic:

- Wastes crawl budget due to parameterised URLs

- This tactic is not suitable when the parameter page content is not close enough to the canonical. For example, pagination, searching, translating or some filtering parameters.
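As a rough illustration of the canonical tactic, the sketch below builds the tag a parameterised page could output in its head section. It assumes the canonical page is simply the same URL with the query string removed, which will not hold for every site; `canonical_link_tag` and the example domain are hypothetical.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

def canonical_link_tag(url: str) -> str:
    """Build a rel="canonical" tag that points at the URL without its query string."""
    parts = urlsplit(url)
    # Assumption: the static page is the same path with query and fragment removed.
    canonical = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
    return f'<link rel="canonical" href="{escape(canonical, quote=True)}">'

print(canonical_link_tag("https://www.example.com/clothing/women-dresses?color=green"))
# <link rel="canonical" href="https://www.example.com/clothing/women-dresses">
```

If your static URLs end with a trailing slash, as in the example above, the helper would need to normalise the path accordingly.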

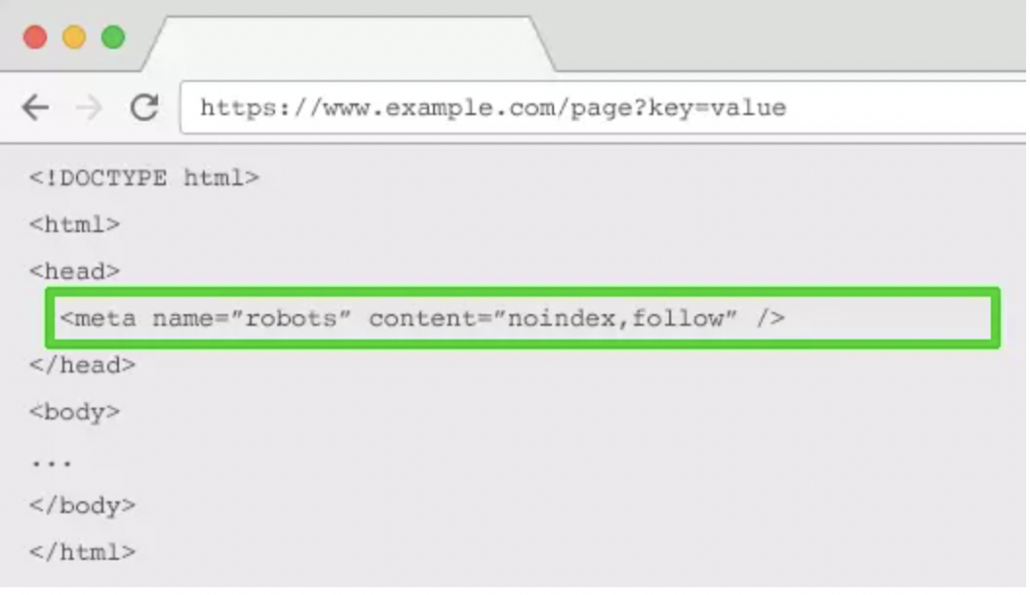

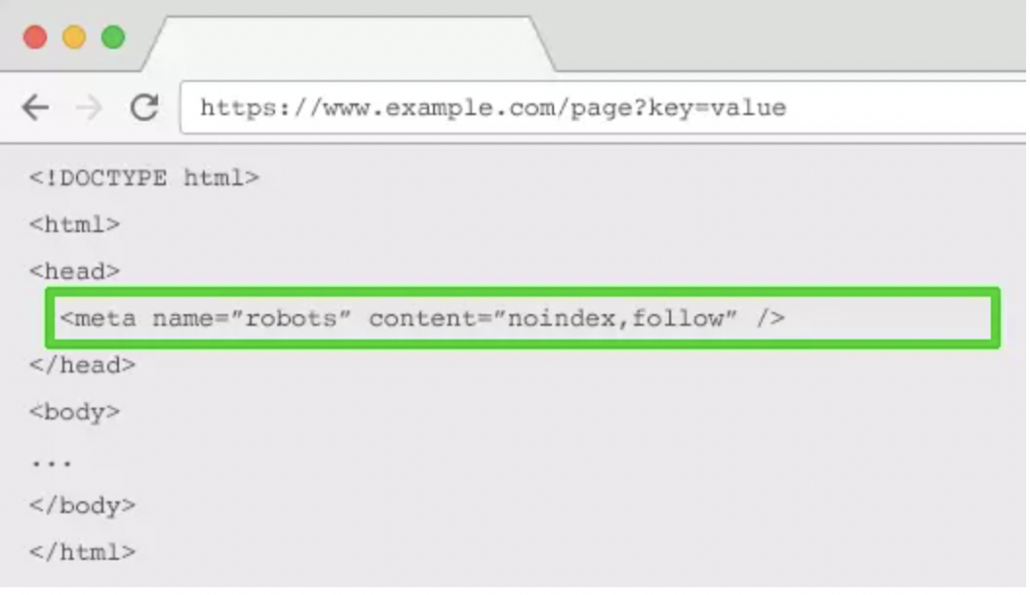

2. Meta Robots Noindex Tag

This tactic will prevent search engines from indexing the page. A parameter page with a “noindex” tag will also tend to be crawled less frequently. Note: This tactic won’t prevent search engines from crawling URLs but will encourage them to do so less frequently.

Source: Search Engine Journal

Pros of using the “noindex” tag tactic:

- It helps to prevent duplicate content

- Suitable for all parameter types you do not wish to be indexed.

- Easy technical implementation.

Cons of using the “noindex” tag tactic:

- It won’t prevent search engines from crawling URLs (but will do so less frequently)

- Doesn’t consolidate ranking signals to the static URL.
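A minimal sketch of the noindex tactic: the page template emits the meta robots tag only when the URL carries a parameter you do not want indexed. The `NOINDEX_PARAMETERS` set and the `robots_meta_tag` helper are hypothetical choices for illustration.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical choice of parameters whose pages should stay out of the index.
NOINDEX_PARAMETERS = {"sort", "order", "sessionid", "q"}

def robots_meta_tag(url: str) -> str:
    """Return a noindex meta tag if the URL uses any of the listed parameters."""
    # keep_blank_values=True so that '?sort=' still counts as using 'sort'.
    keys = set(parse_qs(urlsplit(url).query, keep_blank_values=True))
    if keys & NOINDEX_PARAMETERS:
        return '<meta name="robots" content="noindex">'
    return ""

print(robots_meta_tag("https://www.example.com/dresses?sort=lowest-price"))
# <meta name="robots" content="noindex">
```

Pages without any of the listed parameters get an empty string, so they remain indexable by default.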

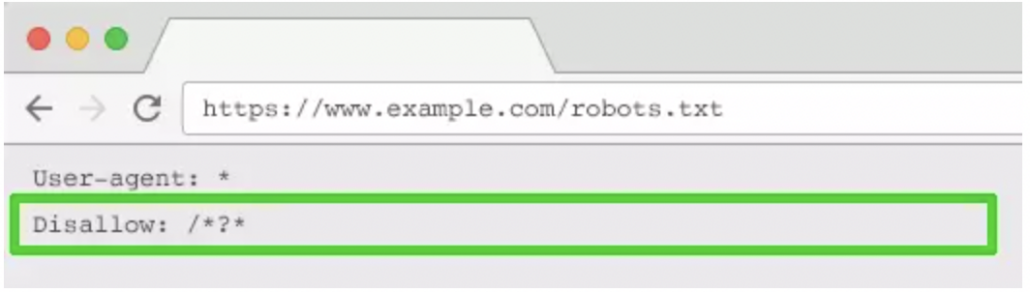

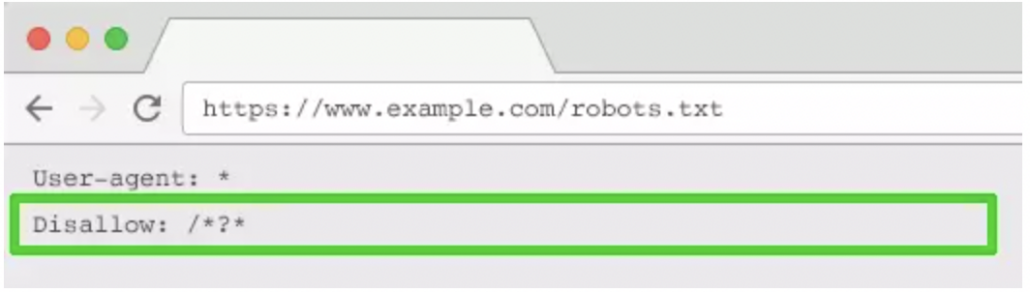

3. Robots.txt Disallow

Using a Disallow rule in robots.txt allows you to block crawlers from accessing specific pages of your website. For example, with a pattern that matches any URL containing a question mark (/*?*), search engines will no longer crawl those pages, as the robots.txt disallows it.

Source: Search Engine Journal

Note: Make sure no other section of your URL structure uses parameters; otherwise, search engines will be blocked from those URLs as well.

Pros of using “disallow” tag tactic:

- Doesn’t waste the crawl budget, so crawling becomes more efficient.

- Avoids duplicate content issues.

- Suitable for all parameter types you do not wish to crawl.

- The technique is simple to implement.

Cons of using “disallow” tag tactic:

- Splits the page ranking signals and doesn’t consolidate to the relevant page

- Doesn’t remove existing URLs from the index.
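To check which URLs a pattern such as /*?* would block, here is a simplified, hand-rolled matcher. It only handles the `*` wildcard and a trailing `$` anchor, and ignores Allow rules and rule precedence, so treat it as a rough approximation of how crawlers evaluate robots.txt rather than a faithful implementation. The function names are hypothetical.

```python
import re
from typing import Iterable

def rule_to_regex(pattern: str) -> "re.Pattern":
    """Translate a robots.txt path pattern into a regular expression."""
    anchored = pattern.endswith("$")      # a trailing '$' means "URL must end here"
    if anchored:
        pattern = pattern[:-1]
    # '*' matches any sequence of characters; everything else is literal.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def is_disallowed(path_and_query: str, disallow_patterns: Iterable[str]) -> bool:
    """True if any Disallow pattern matches the start of the path and query."""
    return any(rule_to_regex(p).match(path_and_query) for p in disallow_patterns)

print(is_disallowed("/clothing/women-dresses?color=green", ["/*?*"]))  # True
print(is_disallowed("/clothing/women-dresses/", ["/*?*"]))             # False
```

Running your real URLs through a check like this before deploying a rule helps catch sections of the site that rely on parameters and would be blocked unintentionally.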

4. Move URL Parameters to Static URLs

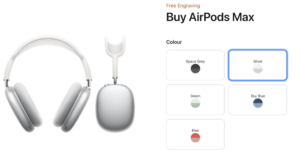

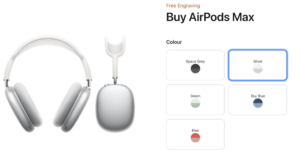

You can opt to use subfolders instead of parameters. Converting dynamic URLs into static ones improves the URL structure of the website. This tactic works well for including descriptive keywords in your URLs, such as categories, products, or colours. For example, Apple uses static URLs. When you click on a colour, it generates a static URL for each colour:

This approach works well with smaller e-commerce websites since you’ll need to produce unique content for each “filter” page. The combinations of filters from your navigation often result in thin content issues, especially if you offer multi-select filters.

In short, if you want your parameters to be indexed, use static URL paths. However, we suggest implementing parameters that are not relevant for search as query strings (for example, tracking, reordering or paginating parameters).

Pros of using static URLs tactic:

- Static URLs have a higher likelihood to rank (only relevant keyword elements)

Cons of using static URLs tactic:

- Significant investment of development time for URL rewrites and producing unique content

- May lead to duplicate and thin content issues.

- Doesn’t consolidate ranking signals.

- An indexable URL filter offers no SEO value, so it’s not suitable for all parameter types.
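The idea of moving indexable parameters into the path while leaving the rest as query strings can be sketched as follows. The `PATH_PARAMETERS` list and `to_static_url` function are hypothetical, and the helper only works on the path and query portion of a URL; real server-side rewriting would normally live in your web server or framework configuration.

```python
from urllib.parse import urlsplit, parse_qsl, urlencode

# Hypothetical: parameters worth indexing become path segments, in this order.
PATH_PARAMETERS = ["type", "color"]

def to_static_url(url: str) -> str:
    """Move selected parameters into the path and keep the rest as a query string."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query)
    values = dict(pairs)
    segments = [values[key] for key in PATH_PARAMETERS if key in values]
    remaining = [(k, v) for k, v in pairs if k not in PATH_PARAMETERS]
    path = "/".join([parts.path.rstrip("/")] + segments) + "/"
    return path + ("?" + urlencode(remaining) if remaining else "")

print(to_static_url("/clothing/women-dresses?color=green&utm_medium=social"))
# /clothing/women-dresses/green/?utm_medium=social
```

Using a fixed segment order means the same filter combination always produces the same static URL, which avoids creating new duplicates.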

How to decide which SEO tactics to implement?

Deciding what to implement will depend on your website’s priorities. We recommend walking through this mind-mapping process and asking yourself these questions:

- What are our business priorities?

- What search queries do consumers use before they get to your site?

- Is crawling efficiency more important than consolidating authority signals?

- Do we have enough resources to produce unique content for product variations?

Source: Search Engine Journal

The Best SEO Practices for Colour Variations

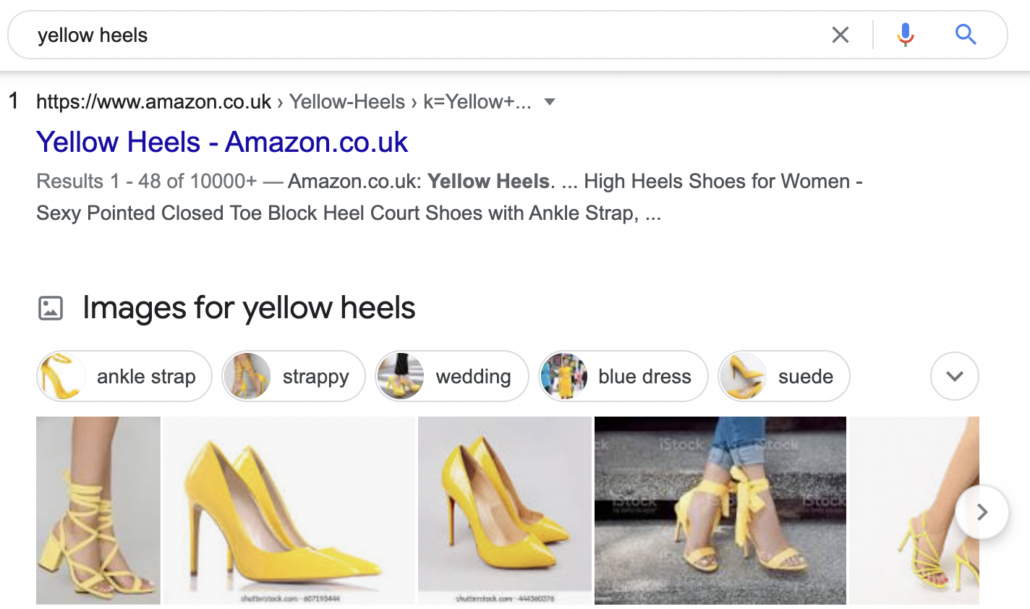

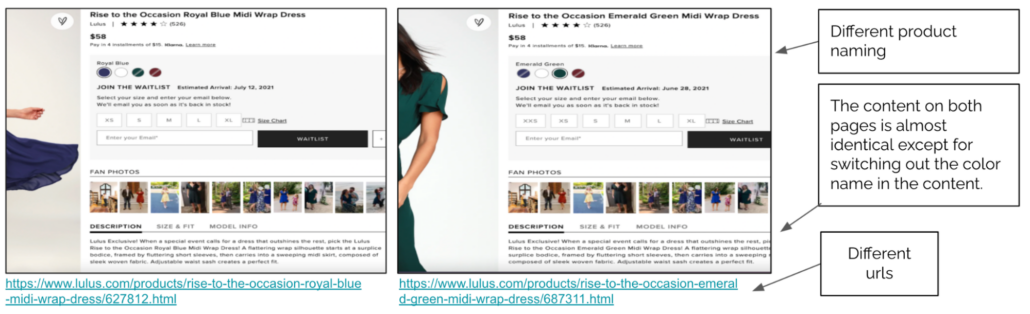

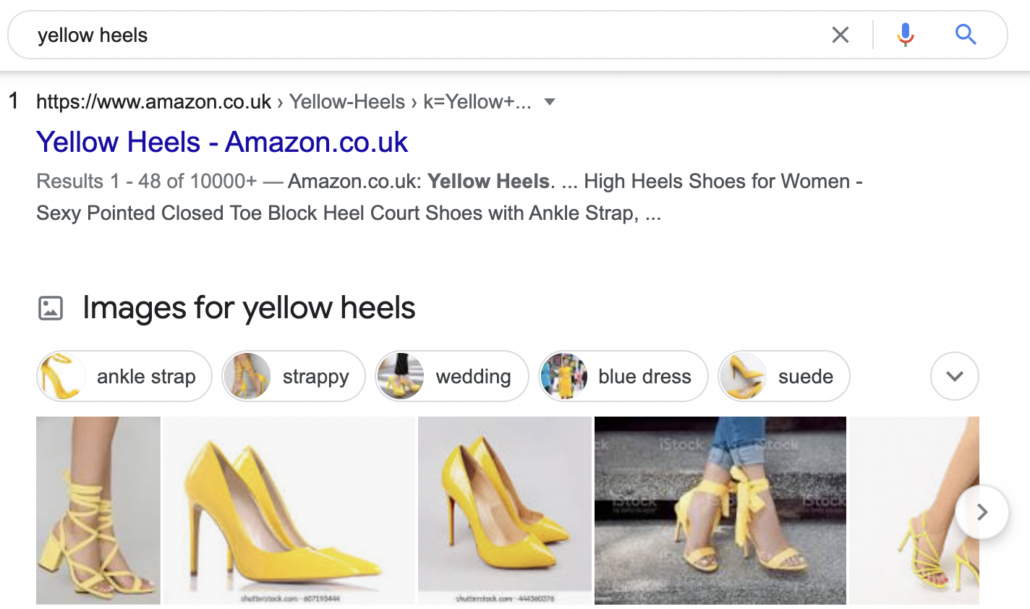

Now that you’re more familiar with the definition of URL parameters, the main issues and solutions, it is time to decide the best strategy for product colour variations. But how can you know which strategy is best for your store? Unfortunately, there isn’t an easy answer.

Jenny Halasz wrote an excellent article on deciding the best colour variation strategy for your business. She highlights some things you should focus on when determining which strategy works for you:

- Usability – This is important for situations where users are “colour-centric”. For example, if a user searches for a pair of shoes to match an outfit, they’ll likely search for the product together with the colour, like [yellow heels].

So, if a specific colour variation is essential to the user’s intent, it makes sense to create a different URL for each colour.

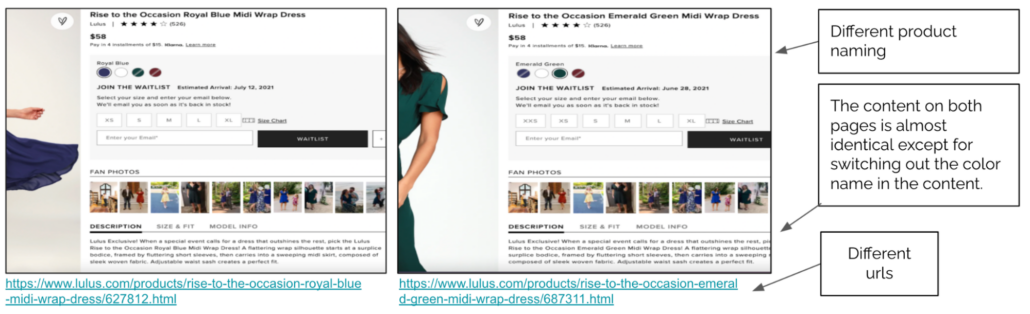

- Duplication of Effort – The next step is to consider how much effort it would take to write unique content for each colour. You should also consider whether you’ll get enough return on that investment of time and resources.

- Do you want all the colour variations to rank well?

- Do the colour variations have enough search volume?

- Do you have enough resources to create unique content?
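The three questions above can be combined into a simple rule of thumb. The sketch below is only one possible way to express it: the function name, its inputs and especially the search-volume threshold are assumptions for illustration, not figures from the sources cited in this article.

```python
def needs_separate_url(should_rank: bool,
                       monthly_searches: int,
                       can_write_unique_content: bool,
                       min_monthly_searches: int = 100) -> bool:
    """Suggest a separate URL only when all three questions are answered 'yes'.

    min_monthly_searches is an arbitrary placeholder; choose a value that
    reflects your own market and keyword research.
    """
    return (should_rank
            and monthly_searches >= min_monthly_searches
            and can_write_unique_content)

# Hypothetical colour variations with made-up search volumes.
print(needs_separate_url(True, 900, True))    # True
print(needs_separate_url(True, 20, True))     # False: too little search demand
print(needs_separate_url(True, 900, False))   # False: no resources for unique content
```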

Think about all the components discussed in this post, and choose the strategy that works best for your eCommerce store. This graph is a great resource when it comes to deciding whether you need your product variation on a separate URL.

Source: MarketingSyrup

Resources:

- https://www.searchenginejournal.com/seo-guide-to-ecommerce/162353/#close

- https://www.searchenginejournal.com/technical-seo/url-parameter-handling/

- https://www.searchenginejournal.com/seo-best-practices-for-color-variations/265323/

- https://www.semrush.com/blog/url-parameters/

- https://www.semrush.com/blog/on-page-seo-basics-urls/